@CITY - Odometry

Odometry in urban environments

Funding: Federal Ministry for Economic Affairs and Energy

Website: @CITY

Project period: 2019-2021

Cooperation partner: Continental AG

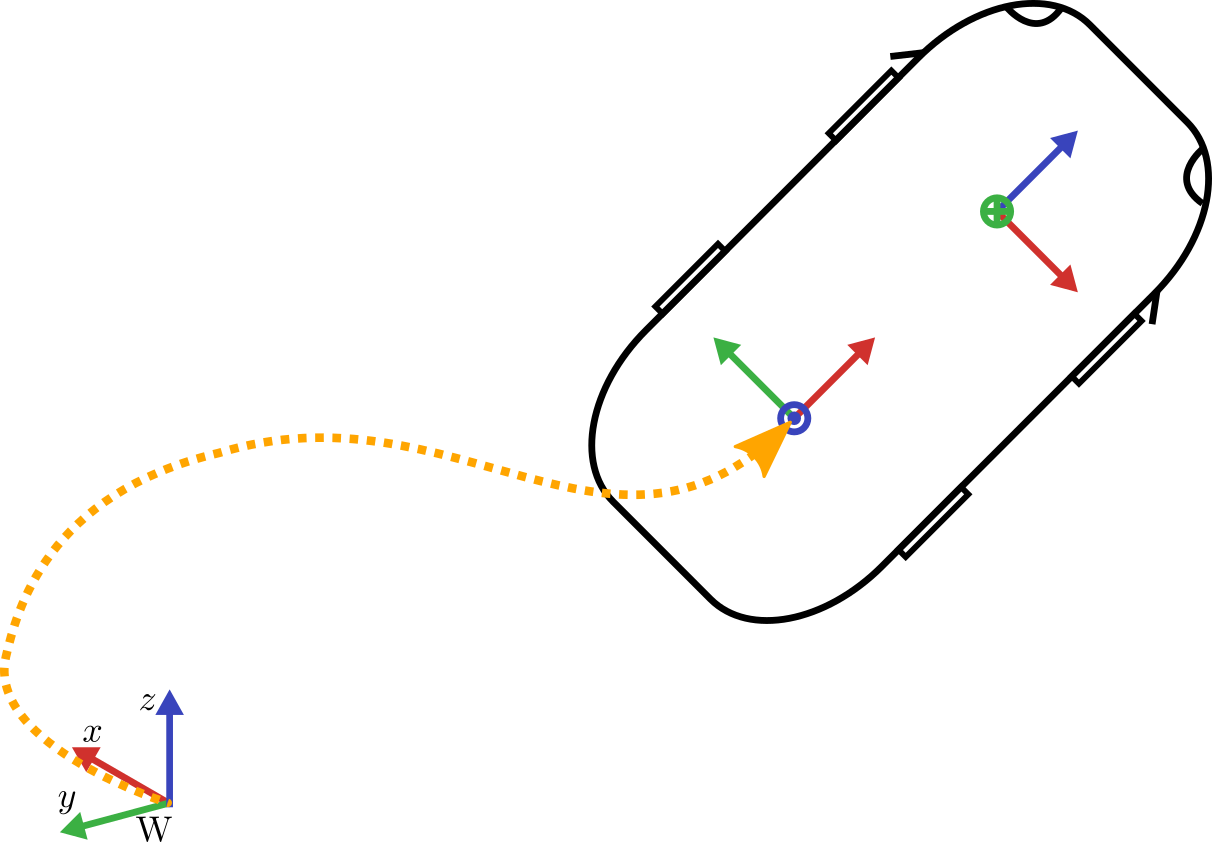

A crucial part of autonomous driving is the precise determination of the vehicle’s odometry. In contrast to absolute localization via GNSS methods, odometry only computes the vehicle’s motion relative to its starting point. The resulting position, orientation, and velocity are used by several autonomous driving algorithms. This project investigates different methods for achieving high-frequency, jump-free odometry through multi-sensor fusion, with a special focus on the inertial measurement unit (IMU) and the image stream of a camera.
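To illustrate what "relative motion with respect to the starting point" means, the following minimal sketch accumulates planar motion increments into a pose. It is not taken from the project: the function name `compose`, the planar (x, y, yaw) pose, and the example increments are assumptions chosen for illustration only.

```python
import math

def compose(pose, delta):
    """Append a motion increment to a planar pose.

    pose  = (x, y, yaw) expressed in the frame of the starting point.
    delta = (dx, dy, dyaw) expressed in the current vehicle frame.
    Both tuples are hypothetical conventions used only in this sketch.
    """
    x, y, yaw = pose
    dx, dy, dyaw = delta
    # Rotate the increment into the start frame, then add it.
    x += math.cos(yaw) * dx - math.sin(yaw) * dy
    y += math.sin(yaw) * dx + math.cos(yaw) * dy
    return (x, y, yaw + dyaw)

# The vehicle starts at the origin of its own odometry frame.
pose = (0.0, 0.0, 0.0)
# Drive 1 m forward while turning 90 degrees left, then 1 m forward again.
for delta in [(1.0, 0.0, math.pi / 2), (1.0, 0.0, 0.0)]:
    pose = compose(pose, delta)
```

Because every increment is added to the previous pose, the result is smooth from step to step, but any error in an increment stays in the pose from then on. No absolute reference such as GNSS enters this calculation.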

The final odometry algorithm combines the IMU and the camera in a so-called loosely coupled fashion. In addition, steering wheel and vehicle speed measurements are integrated by means of motion models. All sensor information is fused in an Unscented Kalman Filter (UKF) to produce the final odometry result.
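As a rough illustration of how speed and steering measurements could enter a UKF, the sketch below shows only the prediction step: sigma points are propagated through a motion model and recombined into a mean and covariance. The project page does not specify its motion models or filter state, so the kinematic bicycle model, the planar state (x, y, yaw), the parameter `kappa`, and all function names here are assumptions and not the project's implementation.

```python
import math

def cholesky(A):
    """Lower-triangular L with L * L^T = A (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L

def unscented_transform(mean, cov, f, kappa=0.0):
    """Propagate (mean, cov) through f using 2n + 1 sigma points."""
    n = len(mean)
    L = cholesky([[(n + kappa) * c for c in row] for row in cov])
    points = [list(mean)]
    for j in range(n):
        col = [L[i][j] for i in range(n)]
        points.append([m + c for m, c in zip(mean, col)])
        points.append([m - c for m, c in zip(mean, col)])
    weights = [kappa / (n + kappa)] + [1.0 / (2.0 * (n + kappa))] * (2 * n)
    ys = [f(p) for p in points]
    m = len(ys[0])
    # Note: yaw is averaged like an ordinary number here, which is only
    # reasonable while the sigma points stay far from the +/-pi wrap-around.
    y_mean = [sum(w * y[i] for w, y in zip(weights, ys)) for i in range(m)]
    y_cov = [[sum(w * (y[i] - y_mean[i]) * (y[j] - y_mean[j])
                  for w, y in zip(weights, ys))
              for j in range(m)] for i in range(m)]
    return y_mean, y_cov

def bicycle_step(state, v, steer, wheelbase, dt):
    """Kinematic bicycle model (assumed here): v in m/s, steer in rad."""
    x, y, yaw = state
    return [x + v * math.cos(yaw) * dt,
            y + v * math.sin(yaw) * dt,
            yaw + v * math.tan(steer) / wheelbase * dt]

def ukf_predict(mean, cov, Q, v, steer, wheelbase, dt):
    """UKF prediction: unscented transform plus additive process noise Q."""
    m, P = unscented_transform(
        mean, cov, lambda s: bicycle_step(s, v, steer, wheelbase, dt))
    P = [[P[i][j] + Q[i][j] for j in range(len(m))] for i in range(len(m))]
    return m, P

# Example values are made up for illustration.
mean = [0.0, 0.0, 0.0]
cov = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 0.0004]]
Q = [[1e-4, 0.0, 0.0], [0.0, 1e-4, 0.0], [0.0, 0.0, 1e-6]]
mean_pred, cov_pred = ukf_predict(mean, cov, Q, v=10.0, steer=0.0,
                                  wheelbase=2.8, dt=0.1)
```

A complete filter would also need an update step that corrects this prediction with the IMU and camera results. That part depends on details the page does not give and is left out here.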

Publications:

| No. | Year | Publication |
|---|---|---|
| [3] | 2022 | Visual-Inertial Odometry aided by Speed and Steering Angle Measurements, In 25th International Conference on Information Fusion (FUSION), IEEE, 2022. |
| [2] | 2021 | Visual-Multi-Sensor Odometry with Application in Autonomous Driving, In 93rd IEEE Vehicular Technology Conference (VTC2021-Spring), IEEE, 2021. |
| [1] | 2020 | Kalman Filter with Moving Reference for Jump-Free, Multi-Sensor Odometry with Application in Autonomous Driving, In 23rd International Conference on Information Fusion (FUSION), IEEE, 2020. |
